Documentation ¶

Overview ¶

Package lingua accurately detects the natural language of written text, be it long or short.

What this library does ¶

Its task is simple: It tells you which language some text is written in. This is very useful as a preprocessing step for linguistic data in natural language processing applications such as text classification and spell checking. Other use cases, for instance, might include routing e-mails to the right geographically located customer service department, based on the e-mails' languages.

Why this library exists ¶

Language detection is often done as part of large machine learning frameworks or natural language processing applications. If you don't need the full functionality of those systems, or don't want to invest the time to learn them, a small, flexible library comes in handy.

So far, the only other comprehensive open source library in the Go ecosystem for this task is Whatlanggo (https://github.com/abadojack/whatlanggo). Unfortunately, it has two major drawbacks:

1. Detection only works with quite lengthy text fragments. For very short text snippets such as Twitter messages, it does not provide adequate results.

2. The more languages take part in the decision process, the less accurate the detection results become.

Lingua aims to eliminate these problems. It needs almost no configuration and yields fairly accurate results on both long and short text, even on single words and phrases. It draws on both rule-based and statistical methods but does not use any dictionaries of words. It does not need a connection to any external API or service either. Once the library has been downloaded, it can be used completely offline.

Supported languages ¶

Compared to other language detection libraries, Lingua's focus is on quality over quantity, that is, getting detection right for a small set of languages first before adding new ones. Currently, 75 languages are supported. They are listed as variants of type Language.

How good it is ¶

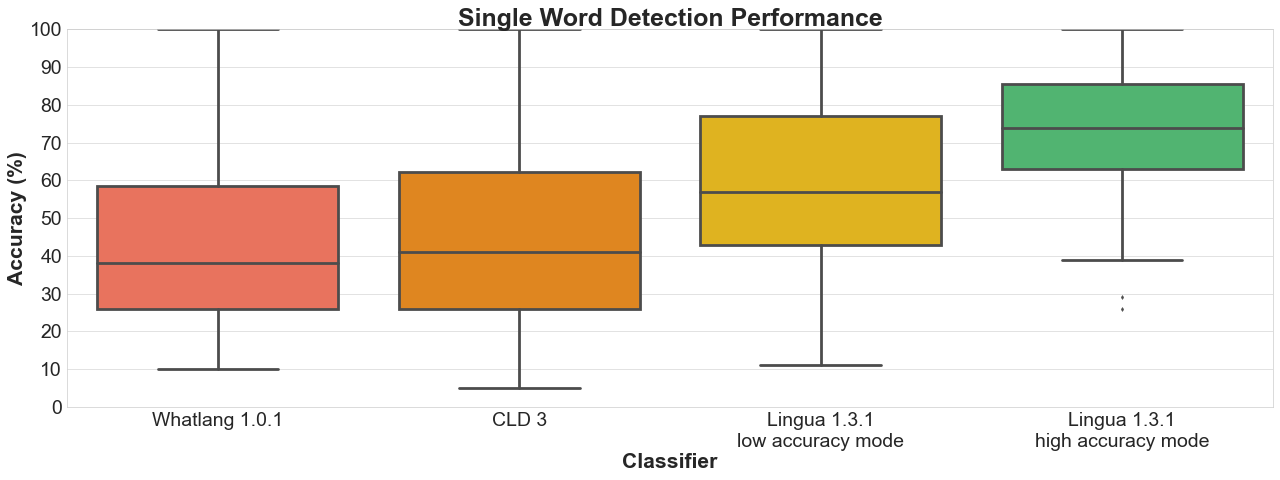

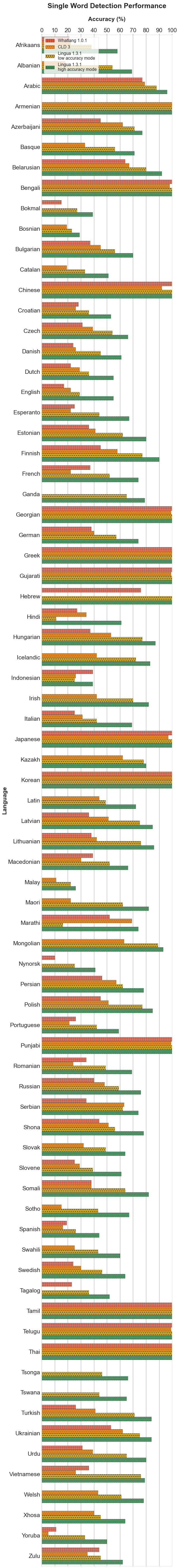

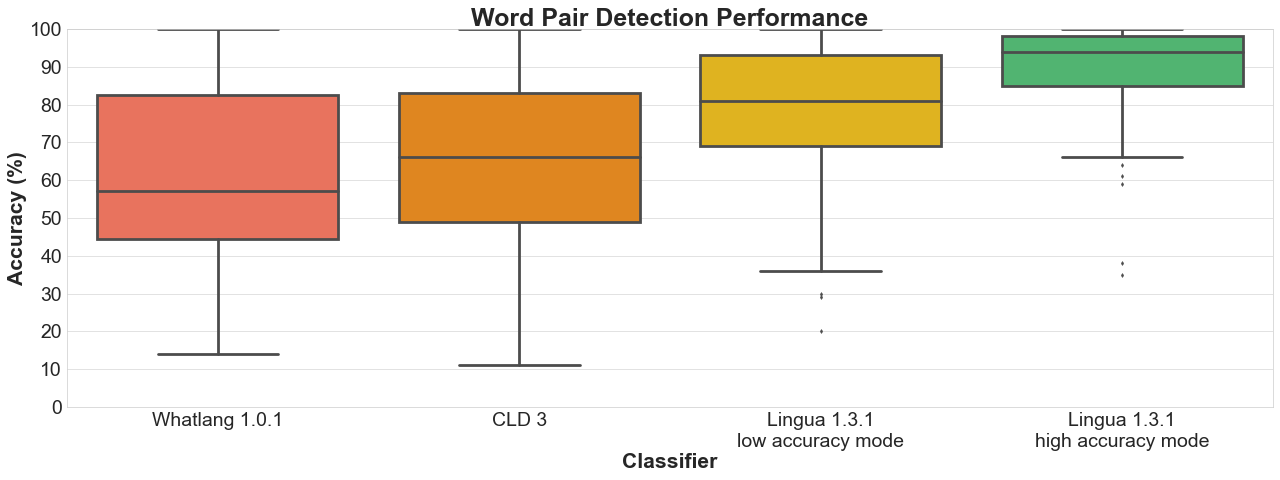

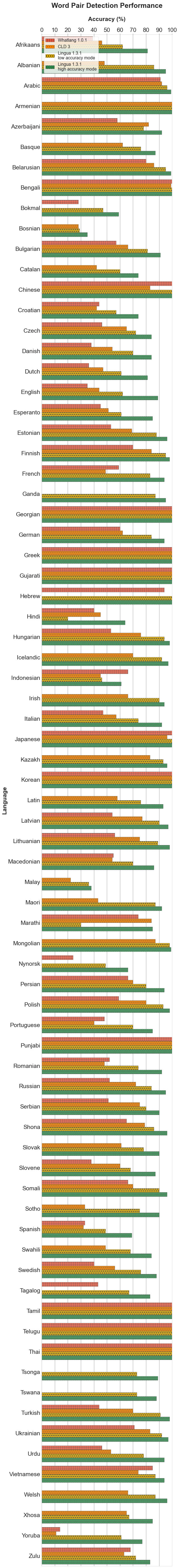

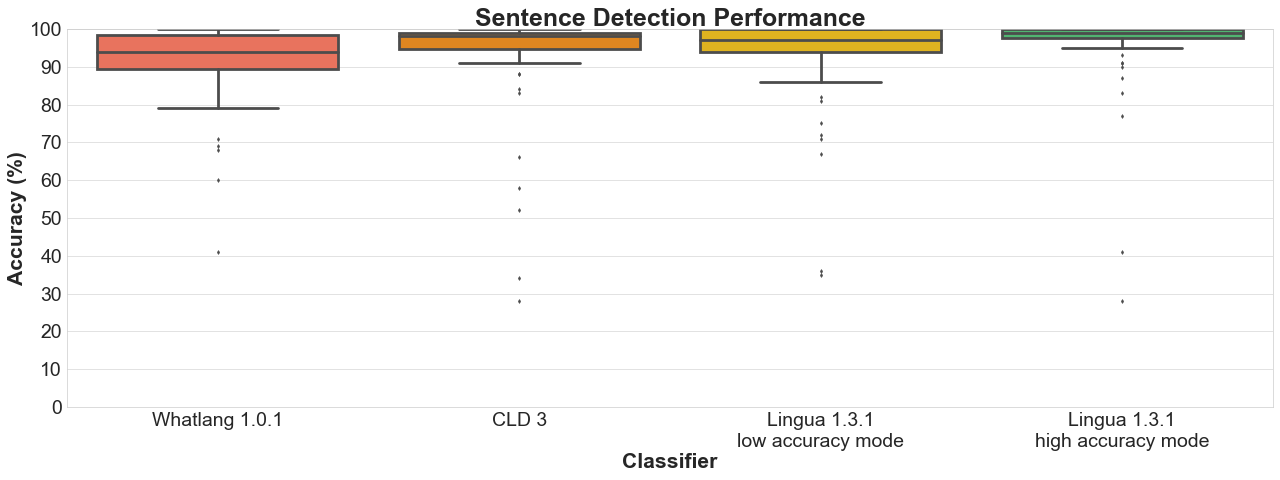

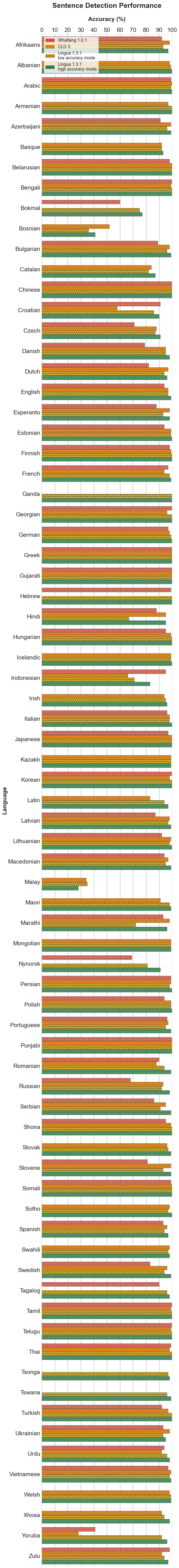

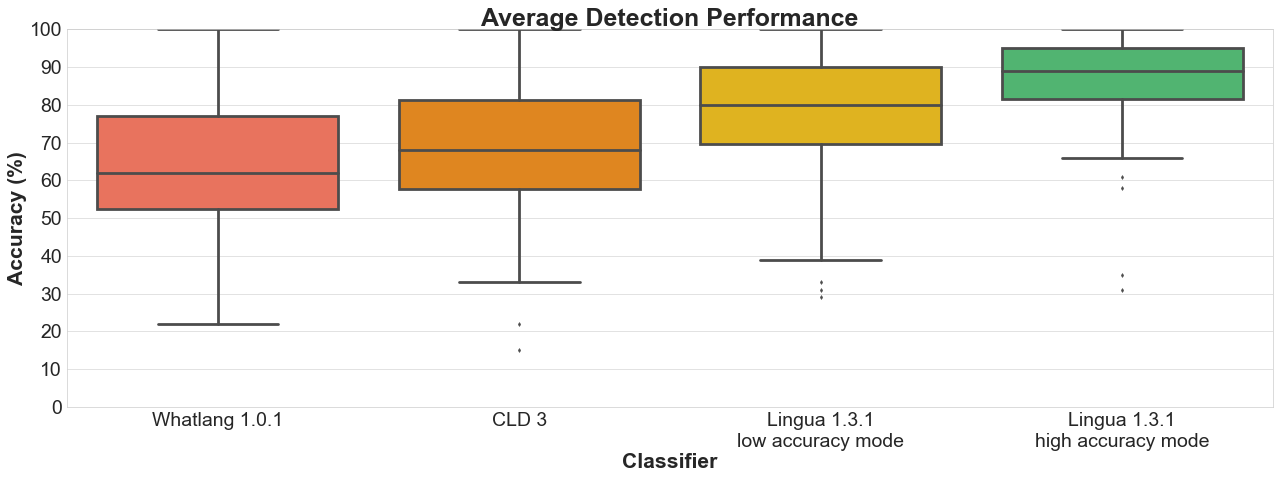

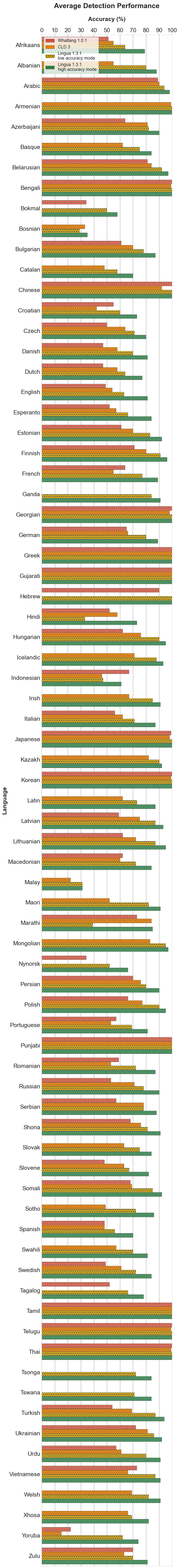

Lingua is able to report accuracy statistics for some bundled test data available for each supported language. The test data for each language is split into three parts:

1. a list of single words with a minimum length of 5 characters

2. a list of word pairs with a minimum length of 10 characters

3. a list of complete grammatical sentences of various lengths

Both the language models and the test data have been created from separate documents of the Wortschatz corpora (https://wortschatz.uni-leipzig.de) offered by Leipzig University, Germany. Data crawled from various news websites have been used for training, each corpus comprising one million sentences. For testing, corpora made of arbitrarily chosen websites have been used, each comprising ten thousand sentences. From each test corpus, a random unsorted subset of 1000 single words, 1000 word pairs and 1000 sentences has been extracted, respectively.

Given the generated test data, I have compared the detection results of Lingua and Whatlanggo over the data of Lingua's 75 supported languages. Additionally, I have added Google's CLD3 (https://github.com/google/cld3/) to the comparison with the help of the gocld3 bindings (https://github.com/jmhodges/gocld3). Languages that are not supported by CLD3 or Whatlanggo are simply ignored during the detection process. Lingua clearly outperforms its contenders.

Why it is better than other libraries ¶

Language detectors commonly use a probabilistic n-gram (https://en.wikipedia.org/wiki/N-gram) model trained on the character distribution in some training corpus. Most libraries only use n-grams of size 3 (trigrams), which is satisfactory for detecting the language of longer text fragments consisting of multiple sentences. For short phrases or single words, however, trigrams are not enough. The shorter the input text is, the fewer n-grams are available, and the probabilities estimated from so few n-grams are not reliable. This is why Lingua makes use of n-grams of sizes 1 up to 5, which results in much more accurate prediction of the correct language.
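To make the argument about short inputs concrete, the following sketch counts the character n-grams available in a single five-letter word. The helper name `charNgrams` is made up for this illustration and is not part of Lingua's API; the sketch only shows how much more evidence sizes 1 through 5 provide compared with trigrams alone.

```go
package main

import "fmt"

// charNgrams returns all contiguous character n-grams of length n in text.
// It works on runes so that multi-byte characters count as one character.
// This is an illustrative helper, not a function of the lingua package.
func charNgrams(text string, n int) []string {
	runes := []rune(text)
	if n <= 0 || n > len(runes) {
		return nil
	}
	ngrams := make([]string, 0, len(runes)-n+1)
	for i := 0; i+n <= len(runes); i++ {
		ngrams = append(ngrams, string(runes[i:i+n]))
	}
	return ngrams
}

// countUpTo sums the number of n-grams for all sizes from 1 to maxN.
func countUpTo(text string, maxN int) int {
	total := 0
	for n := 1; n <= maxN; n++ {
		total += len(charNgrams(text, n))
	}
	return total
}

func main() {
	word := "bitte"
	// Trigrams alone yield only three observations for a five-letter word.
	fmt.Println(charNgrams(word, 3)) // [bit itt tte]
	// Sizes 1 through 5 together yield fifteen observations.
	fmt.Println(countUpTo(word, 5)) // 15
}
```

With only three trigrams, a single unusual character sequence can dominate the estimate; the additional unigrams, bigrams, fourgrams and fivegrams spread the decision over more observations.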

A second important difference is that Lingua does not only use such a statistical model, but also a rule-based engine. This engine first determines the alphabet of the input text and searches for characters which are unique to one or more languages. If exactly one language can be reliably chosen this way, the statistical model is not necessary anymore. In any case, the rule-based engine filters out languages that do not satisfy the conditions of the input text. Only then, in a second step, is the probabilistic n-gram model taken into consideration. This makes sense because loading fewer language models means lower memory consumption and better runtime performance.
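The filtering idea can be sketched with the standard `unicode` package. This is a simplified illustration under stated assumptions, not Lingua's actual rule engine: the `candidate` type, the `defaultCandidates` list and `filterByScript` are hypothetical, and the sketch only covers the script check, not the search for language-specific characters.

```go
package main

import (
	"fmt"
	"unicode"
)

// candidate is a hypothetical pairing of a language name with its script.
type candidate struct {
	name   string
	script *unicode.RangeTable
}

// defaultCandidates is a made-up candidate list used for illustration.
var defaultCandidates = []candidate{
	{"English", unicode.Latin},
	{"German", unicode.Latin},
	{"Russian", unicode.Cyrillic},
	{"Greek", unicode.Greek},
}

// filterByScript keeps only those candidates whose script contains every
// letter of text. Non-letter characters such as digits are ignored.
func filterByScript(text string, candidates []candidate) []string {
	var kept []string
	for _, c := range candidates {
		matches := true
		for _, r := range text {
			if unicode.IsLetter(r) && !unicode.Is(c.script, r) {
				matches = false
				break
			}
		}
		if matches {
			kept = append(kept, c.name)
		}
	}
	return kept
}

func main() {
	// Only one candidate remains, so no statistical model would be needed.
	fmt.Println(filterByScript("привет", defaultCandidates)) // [Russian]
	// Two candidates remain, so a statistical model has to decide.
	fmt.Println(filterByScript("hello", defaultCandidates)) // [English German]
}
```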

In general, it is always a good idea to restrict the set of languages to be considered in the classification process using the respective API methods. If you know beforehand that certain languages will never occur in an input text, do not let them take part in the classification process. The filtering mechanism of the rule-based engine is quite good; however, filtering based on your own knowledge of the input text is always preferable.

Example (Basic) ¶

languages := []lingua.Language{

lingua.English,

lingua.French,

lingua.German,

lingua.Spanish,

}

detector := lingua.NewLanguageDetectorBuilder().

FromLanguages(languages...).

Build()

if language, exists := detector.DetectLanguageOf("languages are awesome"); exists {

fmt.Println(language)

}

Output: English

Example (BuilderApi) ¶

There might be classification tasks where you know beforehand that your language data is definitely not written in Latin, for instance. The detection accuracy can become better in such cases if you exclude certain languages from the decision process or just explicitly include relevant languages.

// Include all languages available in the library.

lingua.NewLanguageDetectorBuilder().FromAllLanguages()

// Include only languages that are not yet extinct (= currently excludes Latin).

lingua.NewLanguageDetectorBuilder().FromAllSpokenLanguages()

// Include only languages written with Cyrillic script.

lingua.NewLanguageDetectorBuilder().FromAllLanguagesWithCyrillicScript()

// Exclude only the Spanish language from the decision algorithm.

lingua.NewLanguageDetectorBuilder().FromAllLanguagesWithout(lingua.Spanish)

// Only decide between English and German.

lingua.NewLanguageDetectorBuilder().FromLanguages(lingua.English, lingua.German)

// Select languages by ISO 639-1 code.

lingua.NewLanguageDetectorBuilder().FromIsoCodes639_1(lingua.EN, lingua.DE)

// Select languages by ISO 639-3 code.

lingua.NewLanguageDetectorBuilder().FromIsoCodes639_3(lingua.ENG, lingua.DEU)

Output:

Example (ConfidenceValues) ¶

Knowing the most likely language is nice, but how reliable is the computed likelihood? And how much less likely are the other examined languages in comparison to the most likely one? In the example below, a slice of ConfidenceValue is returned containing those languages which the calling instance of LanguageDetector has been built from. The entries are sorted by their confidence value in descending order. Each value is a probability between 0.0 and 1.0. The probabilities of all languages will sum to 1.0. If the language is unambiguously identified by the rule engine, the value 1.0 will always be returned for this language. The other languages will receive a value of 0.0.

languages := []lingua.Language{

lingua.English,

lingua.French,

lingua.German,

lingua.Spanish,

}

detector := lingua.NewLanguageDetectorBuilder().

FromLanguages(languages...).

Build()

confidenceValues := detector.ComputeLanguageConfidenceValues("languages are awesome")

for _, elem := range confidenceValues {

fmt.Printf("%s: %.2f\n", elem.Language(), elem.Value())

}

Output:

English: 0.93

French: 0.04

German: 0.02

Spanish: 0.01
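The property that all confidence values lie between 0.0 and 1.0 and sum to 1.0 can be illustrated with a small normalization sketch. It assumes that each language has a summed log probability and turns these into probabilities with a softmax; whether Lingua normalizes exactly this way is not stated here, and the `confidence` type, `normalize` function and sample scores are made up for the illustration.

```go
package main

import (
	"fmt"
	"math"
	"sort"
)

// confidence is a hypothetical pairing of a language name with its value.
type confidence struct {
	language string
	value    float64
}

// normalize turns summed log probabilities into values that sum to 1.0,
// sorted in descending order. A softmax is used purely for illustration.
func normalize(logProbs map[string]float64) []confidence {
	// Subtract the largest log probability before exponentiating so that
	// math.Exp does not underflow to zero for every language at once.
	maxLog := math.Inf(-1)
	for _, lp := range logProbs {
		if lp > maxLog {
			maxLog = lp
		}
	}
	sum := 0.0
	for _, lp := range logProbs {
		sum += math.Exp(lp - maxLog)
	}
	result := make([]confidence, 0, len(logProbs))
	for language, lp := range logProbs {
		result = append(result, confidence{language, math.Exp(lp-maxLog) / sum})
	}
	sort.Slice(result, func(i, j int) bool {
		if result[i].value != result[j].value {
			return result[i].value > result[j].value
		}
		return result[i].language < result[j].language
	})
	return result
}

// sampleLogProbs holds made-up scores, not output from any real model.
var sampleLogProbs = map[string]float64{
	"English": -42.0,
	"French":  -45.1,
	"German":  -45.8,
	"Spanish": -46.5,
}

func main() {
	for _, c := range normalize(sampleLogProbs) {
		fmt.Printf("%s: %.2f\n", c.language, c.value)
	}
}
```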

Example (EagerLoading) ¶

By default, Lingua uses lazy-loading to load only those language models on demand which are considered relevant by the rule-based filter engine. For web services, for instance, it is rather beneficial to preload all language models into memory to avoid unexpected latency while waiting for the service response. If you want to enable the eager-loading mode, you can do it as seen below. Multiple instances of LanguageDetector share the same language models in memory which are accessed asynchronously by the instances.

lingua.NewLanguageDetectorBuilder().

FromAllLanguages().

WithPreloadedLanguageModels().

Build()

Output:
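The difference between the two loading modes can be pictured with a small shared cache. The sketch below is a simplified model under stated assumptions, not Lingua's implementation: `modelStore`, `get` and `preload` are hypothetical names, and a string stands in for an expensive-to-load language model.

```go
package main

import (
	"fmt"
	"sync"
)

// modelStore is a hypothetical cache that several detectors could share.
type modelStore struct {
	mu     sync.Mutex
	models map[string]string
	loads  int
}

func newModelStore() *modelStore {
	return &modelStore{models: make(map[string]string)}
}

// get loads a model on first use (lazy loading) and reuses it afterwards.
func (s *modelStore) get(language string) string {
	s.mu.Lock()
	defer s.mu.Unlock()
	if m, ok := s.models[language]; ok {
		return m
	}
	s.loads++
	m := "model for " + language // placeholder for an expensive load
	s.models[language] = m
	return m
}

// preload loads all given models up front (eager loading).
func (s *modelStore) preload(languages ...string) {
	for _, language := range languages {
		s.get(language)
	}
}

// loadCount reports how many models have been loaded so far.
func (s *modelStore) loadCount() int {
	s.mu.Lock()
	defer s.mu.Unlock()
	return s.loads
}

func main() {
	lazy := newModelStore()
	lazy.get("English")
	lazy.get("English")           // served from the cache
	fmt.Println(lazy.loadCount()) // 1

	eager := newModelStore()
	eager.preload("English", "French", "German")
	eager.get("French")            // already in memory, no extra latency
	fmt.Println(eager.loadCount()) // 3
}
```

In the lazy case the first request for a language pays the loading cost; in the eager case that cost is paid once at startup, which is the trade-off described above for web services.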

Example (MinimumRelativeDistance) ¶

By default, Lingua returns the most likely language for a given input text. However, there are certain words that are spelled the same in more than one language. The word `prologue`, for instance, is both a valid English and French word. Lingua would output either English or French which might be wrong in the given context. For cases like that, it is possible to specify a minimum relative distance that the logarithmized and summed up probabilities for each possible language have to satisfy. It can be stated as seen below.

Be aware that the distance between the language probabilities is dependent on the length of the input text. The longer the input text, the larger the distance between the languages. So if you want to classify very short text phrases, do not set the minimum relative distance too high. Otherwise Unknown will be returned most of the time as in the example below. This is the return value for cases where language detection is not reliably possible.

languages := []lingua.Language{

lingua.English,

lingua.French,

lingua.German,

lingua.Spanish,

}

detector := lingua.NewLanguageDetectorBuilder().

FromLanguages(languages...).

WithMinimumRelativeDistance(0.9).

Build()

language, exists := detector.DetectLanguageOf("languages are awesome")

fmt.Println(language)

fmt.Println(exists)

Output:

Unknown

false
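The effect of the threshold can be sketched as follows. The exact formula Lingua applies is not spelled out in this documentation, so the sketch assumes the distance is simply the gap between the two highest confidence values; the `scored` type, the `pick` function and the sample ranking (taken from the rounded values of the confidence example) are illustrative only.

```go
package main

import "fmt"

// scored is a hypothetical pairing of a language name with a confidence value.
type scored struct {
	language string
	value    float64
}

// pick returns the top-ranked language, or ("Unknown", false) when its lead
// over the runner-up is smaller than minDistance. The slice must be sorted
// in descending order of value. Measuring the distance as a plain difference
// is an assumption of this sketch.
func pick(ranked []scored, minDistance float64) (string, bool) {
	if len(ranked) == 0 {
		return "Unknown", false
	}
	if len(ranked) == 1 {
		return ranked[0].language, true
	}
	if ranked[0].value-ranked[1].value < minDistance {
		return "Unknown", false
	}
	return ranked[0].language, true
}

// sampleRanking reuses the rounded values from the confidence example.
var sampleRanking = []scored{
	{"English", 0.93},
	{"French", 0.04},
	{"German", 0.02},
	{"Spanish", 0.01},
}

func main() {
	fmt.Println(pick(sampleRanking, 0.9)) // Unknown false
	fmt.Println(pick(sampleRanking, 0.5)) // English true
}
```

Under this assumption, a lead of 0.89 is not enough for a threshold of 0.9, which is why a high threshold turns most short inputs into Unknown.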

Example (MultipleLanguagesDetection) ¶

languages := []lingua.Language{

lingua.English,

lingua.French,

lingua.German,

}

detector := lingua.NewLanguageDetectorBuilder().

FromLanguages(languages...).

Build()

sentence := "Parlez-vous français? " +

"Ich spreche Französisch nur ein bisschen. " +

"A little bit is better than nothing."

for _, result := range detector.DetectMultipleLanguagesOf(sentence) {

fmt.Printf("%s: '%s'\n", result.Language(), sentence[result.StartIndex():result.EndIndex()])

}

Output:

French: 'Parlez-vous français? '

German: 'Ich spreche Französisch nur ein bisschen. '

English: 'A little bit is better than nothing.'
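Because the example slices the input string directly with the start and end indices, those indices have to be byte offsets rather than character counts, since that is how string slicing works in Go. The following sketch shows why this matters for text with non-ASCII characters; the `section` type and the helper functions are hypothetical stand-ins, not part of Lingua.

```go
package main

import (
	"fmt"
	"unicode/utf8"
)

// section is a hypothetical stand-in for a detection result with byte offsets.
type section struct {
	language   string
	start, end int
}

// byteAndRuneLen returns the length of s in bytes and in characters.
func byteAndRuneLen(s string) (int, int) {
	return len(s), utf8.RuneCountInString(s)
}

// cut extracts the substring described by a section using byte offsets.
func cut(text string, s section) string {
	return text[s.start:s.end]
}

func main() {
	first := "Parlez-vous français? "
	second := "A little bit is better than nothing."
	text := first + second

	// The letter ç occupies two bytes in UTF-8, so the two lengths differ.
	fmt.Println(byteAndRuneLen(first)) // 23 22

	sections := []section{
		{"French", 0, len(first)},
		{"English", len(first), len(text)},
	}
	for _, s := range sections {
		fmt.Printf("%s: '%s'\n", s.language, cut(text, s))
	}
}
```

If the indices are needed as character positions, for example to highlight text in a user interface, they would have to be converted, for instance with `utf8.RuneCountInString(text[:index])`.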

Index ¶

- func CreateAndWriteLanguageModelFiles(inputFilePath string, outputDirectoryPath string, language Language, ...) error

- func CreateAndWriteTestDataFiles(inputFilePath string, outputDirectoryPath string, charClass string, ...) error

- type ConfidenceValue

- type DetectionResult

- type IsoCode639_1

- type IsoCode639_3

- type Language

- func AllLanguages() []Language

- func AllLanguagesWithArabicScript() []Language

- func AllLanguagesWithCyrillicScript() []Language

- func AllLanguagesWithDevanagariScript() []Language

- func AllLanguagesWithLatinScript() []Language

- func AllSpokenLanguages() (languages []Language)

- func GetLanguageFromIsoCode639_1(isoCode IsoCode639_1) Language

- func GetLanguageFromIsoCode639_3(isoCode IsoCode639_3) Language

- type LanguageDetector

- type LanguageDetectorBuilder

- type UnconfiguredLanguageDetectorBuilder

Constants ¶

This section is empty.

Variables ¶

This section is empty.

Functions ¶

func CreateAndWriteLanguageModelFiles ¶

func CreateAndWriteLanguageModelFiles( inputFilePath string, outputDirectoryPath string, language Language, charClass string, ) error

CreateAndWriteLanguageModelFiles creates language model files for accuracy report generation and writes them to a directory.

`inputFilePath` is the path to a txt file used for language model creation. The assumed encoding of the txt file is UTF-8.

`outputDirectoryPath` is the path to an existing directory where the language model files are to be written.

`language` is the language for which to create the language models.

`charClass` is a regex character class such as `\\p{L}` to restrict the set of characters that the language models are built from.

An error is returned if:

- the input file path is not absolute or does not point to an existing txt file

- the input file's encoding is not UTF-8

- the output directory path is not absolute or does not point to an existing directory

Panics if the character class cannot be compiled to a valid regular expression.
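How a character class such as `\\p{L}` restricts the characters taken from a text can be sketched with the standard `regexp` package. The way Lingua embeds the class into a full expression is not documented here, so wrapping it in brackets with a `+` quantifier is an assumption, and `extractWords` and `validateInputPath` are hypothetical helpers that only mirror the documented preconditions.

```go
package main

import (
	"fmt"
	"path/filepath"
	"regexp"
)

// extractWords returns the runs of characters in text that match charClass.
// Wrapping the class as "[...]+" is an assumption of this sketch.
// regexp.MustCompile panics if the resulting expression is invalid.
func extractWords(text, charClass string) []string {
	re := regexp.MustCompile("[" + charClass + "]+")
	return re.FindAllString(text, -1)
}

// validateInputPath mirrors two of the documented preconditions: the path
// must be absolute and must point to a txt file. It does not check that the
// file exists or that its content is valid UTF-8.
func validateInputPath(path string) error {
	if !filepath.IsAbs(path) {
		return fmt.Errorf("input file path %q is not absolute", path)
	}
	if filepath.Ext(path) != ".txt" {
		return fmt.Errorf("input file path %q does not point to a txt file", path)
	}
	return nil
}

func main() {
	// \p{L} matches any Unicode letter, so digits and punctuation are dropped.
	fmt.Println(extractWords("Grüße, 2 Welten!", `\p{L}`)) // [Grüße Welten]

	// A relative path is rejected before any file is read.
	fmt.Println(validateInputPath("corpus.txt"))
}
```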

func CreateAndWriteTestDataFiles ¶

func CreateAndWriteTestDataFiles( inputFilePath string, outputDirectoryPath string, charClass string, maximumLines int, ) error

CreateAndWriteTestDataFiles creates test data files for accuracy report generation and writes them to a directory.

`inputFilePath` is the path to a txt file used for test data creation. The assumed encoding of the txt file is UTF-8.

`outputDirectoryPath` is the path to an existing directory where the test data files are to be written.

`charClass` is a regex character class such as `\\p{L}` to restrict the set of characters that the test data is built from.

`maximumLines` is the maximum number of lines each test data file should have.

An error is returned if:

- the input file path is not absolute or does not point to an existing txt file

- the input file's encoding is not UTF-8

- the output directory path is not absolute or does not point to an existing directory

Panics if the character class cannot be compiled to a valid regular expression.

Types ¶

type ConfidenceValue ¶

type ConfidenceValue interface {

// Language returns the language being part of this ConfidenceValue.

Language() Language

// Value returns a language's confidence value which lies between 0.0 and 1.0.

Value() float64

}

ConfidenceValue is the interface describing a language's confidence value that is computed by LanguageDetector.ComputeLanguageConfidenceValues.

type DetectionResult ¶ added in v1.2.0

type DetectionResult interface {

// StartIndex returns the start index of the identified single-language substring.

StartIndex() int

// EndIndex returns the end index of the identified single-language substring.

EndIndex() int

// Language returns the language being part of this DetectionResult.

Language() Language

}

DetectionResult is the interface describing a contiguous single-language text section within a possibly mixed-language text. It is computed by LanguageDetector.DetectMultipleLanguagesOf.

type IsoCode639_1 ¶

type IsoCode639_1 int

IsoCode639_1 is the type used for enumerating the ISO 639-1 code representations of the supported languages.

const (
	AF IsoCode639_1 = iota // AF is the ISO 639-1 code for Afrikaans.
	AR // AR is the ISO 639-1 code for Arabic.
	AZ // AZ is the ISO 639-1 code for Azerbaijani.
	BE // BE is the ISO 639-1 code for Belarusian.
	BG // BG is the ISO 639-1 code for Bulgarian.
	BN // BN is the ISO 639-1 code for Bengali.
	BS // BS is the ISO 639-1 code for Bosnian.
	CA // CA is the ISO 639-1 code for Catalan.
	CS // CS is the ISO 639-1 code for Czech.
	CY // CY is the ISO 639-1 code for Welsh.
	DA // DA is the ISO 639-1 code for Danish.
	DE // DE is the ISO 639-1 code for German.
	EL // EL is the ISO 639-1 code for Greek.
	EN // EN is the ISO 639-1 code for English.
	EO // EO is the ISO 639-1 code for Esperanto.
	ES // ES is the ISO 639-1 code for Spanish.
	ET // ET is the ISO 639-1 code for Estonian.
	EU // EU is the ISO 639-1 code for Basque.
	FA // FA is the ISO 639-1 code for Persian.
	FI // FI is the ISO 639-1 code for Finnish.
	FR // FR is the ISO 639-1 code for French.
	GA // GA is the ISO 639-1 code for Irish.
	GU // GU is the ISO 639-1 code for Gujarati.
	HE // HE is the ISO 639-1 code for Hebrew.
	HI // HI is the ISO 639-1 code for Hindi.
	HR // HR is the ISO 639-1 code for Croatian.
	HU // HU is the ISO 639-1 code for Hungarian.
	HY // HY is the ISO 639-1 code for Armenian.
	ID // ID is the ISO 639-1 code for Indonesian.
	IS // IS is the ISO 639-1 code for Icelandic.
	IT // IT is the ISO 639-1 code for Italian.
	JA // JA is the ISO 639-1 code for Japanese.
	KA // KA is the ISO 639-1 code for Georgian.
	KK // KK is the ISO 639-1 code for Kazakh.
	KO // KO is the ISO 639-1 code for Korean.
	LA // LA is the ISO 639-1 code for Latin.
	LG // LG is the ISO 639-1 code for Ganda.
	LT // LT is the ISO 639-1 code for Lithuanian.
	LV // LV is the ISO 639-1 code for Latvian.
	MI // MI is the ISO 639-1 code for Maori.
	MK // MK is the ISO 639-1 code for Macedonian.
	MN // MN is the ISO 639-1 code for Mongolian.
	MR // MR is the ISO 639-1 code for Marathi.
	MS // MS is the ISO 639-1 code for Malay.
	NB // NB is the ISO 639-1 code for Bokmal.
	NL // NL is the ISO 639-1 code for Dutch.
	NN // NN is the ISO 639-1 code for Nynorsk.
	PA // PA is the ISO 639-1 code for Punjabi.
	PL // PL is the ISO 639-1 code for Polish.
	PT // PT is the ISO 639-1 code for Portuguese.
	RO // RO is the ISO 639-1 code for Romanian.
	RU // RU is the ISO 639-1 code for Russian.
	SK // SK is the ISO 639-1 code for Slovak.
	SL // SL is the ISO 639-1 code for Slovene.
	SN // SN is the ISO 639-1 code for Shona.
	SO // SO is the ISO 639-1 code for Somali.
	SQ // SQ is the ISO 639-1 code for Albanian.
	SR // SR is the ISO 639-1 code for Serbian.
	ST // ST is the ISO 639-1 code for Sotho.
	SV // SV is the ISO 639-1 code for Swedish.
	SW // SW is the ISO 639-1 code for Swahili.
	TA // TA is the ISO 639-1 code for Tamil.
	TE // TE is the ISO 639-1 code for Telugu.
	TH // TH is the ISO 639-1 code for Thai.
	TL // TL is the ISO 639-1 code for Tagalog.
	TN // TN is the ISO 639-1 code for Tswana.
	TR // TR is the ISO 639-1 code for Turkish.
	TS // TS is the ISO 639-1 code for Tsonga.
	UK // UK is the ISO 639-1 code for Ukrainian.
	UR // UR is the ISO 639-1 code for Urdu.
	VI // VI is the ISO 639-1 code for Vietnamese.
	XH // XH is the ISO 639-1 code for Xhosa.
	YO // YO is the ISO 639-1 code for Yoruba.
	ZH // ZH is the ISO 639-1 code for Chinese.
	ZU // ZU is the ISO 639-1 code for Zulu.
	UnknownIsoCode639_1 // UnknownIsoCode639_1 is the ISO 639-1 code for Unknown.
)

func GetIsoCode639_1FromValue ¶ added in v1.4.0

func GetIsoCode639_1FromValue(name string) IsoCode639_1

GetIsoCode639_1FromValue returns the ISO 639-1 code for the given name.

func (IsoCode639_1) String ¶

func (i IsoCode639_1) String() string
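The pattern behind an `iota`-based enumeration with a `String` method and a lookup by name can be sketched on a reduced scale. The `code` type below is a hypothetical three-value stand-in, not Lingua's IsoCode639_1; in particular, the fallback to an unknown value and the case-insensitive comparison in `codeFromValue` are assumptions of this sketch and may differ from what GetIsoCode639_1FromValue does.

```go
package main

import (
	"fmt"
	"strings"
)

// code is a hypothetical, reduced enumeration used for illustration only.
type code int

const (
	af code = iota
	ar
	az
	unknownCode
)

// codeNames maps each constant to its textual form, indexed by its value.
var codeNames = [...]string{"AF", "AR", "AZ", "Unknown"}

// String implements fmt.Stringer so that constants print as their names.
func (c code) String() string {
	if c < 0 || int(c) >= len(codeNames) {
		return "Unknown"
	}
	return codeNames[c]
}

// codeFromValue maps a name back to its constant. Returning unknownCode for
// unrecognized names and ignoring case are assumptions of this sketch.
func codeFromValue(name string) code {
	for i, n := range codeNames {
		if strings.EqualFold(n, name) {
			return code(i)
		}
	}
	return unknownCode
}

func main() {
	fmt.Println(ar, codeFromValue("az"), codeFromValue("xx")) // AR AZ Unknown
}
```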

type IsoCode639_3 ¶

type IsoCode639_3 int

IsoCode639_3 is the type used for enumerating the ISO 639-3 code representations of the supported languages.

const (
	AFR IsoCode639_3 = iota // AFR is the ISO 639-3 code for Afrikaans.
	ARA // ARA is the ISO 639-3 code for Arabic.
	AZE // AZE is the ISO 639-3 code for Azerbaijani.
	BEL // BEL is the ISO 639-3 code for Belarusian.
	BEN // BEN is the ISO 639-3 code for Bengali.
	BOS // BOS is the ISO 639-3 code for Bosnian.
	BUL // BUL is the ISO 639-3 code for Bulgarian.
	CAT // CAT is the ISO 639-3 code for Catalan.
	CES // CES is the ISO 639-3 code for Czech.
	CYM // CYM is the ISO 639-3 code for Welsh.
	DAN // DAN is the ISO 639-3 code for Danish.
	DEU // DEU is the ISO 639-3 code for German.
	ELL // ELL is the ISO 639-3 code for Greek.
	ENG // ENG is the ISO 639-3 code for English.
	EPO // EPO is the ISO 639-3 code for Esperanto.
	EST // EST is the ISO 639-3 code for Estonian.
	EUS // EUS is the ISO 639-3 code for Basque.
	FAS // FAS is the ISO 639-3 code for Persian.
	FIN // FIN is the ISO 639-3 code for Finnish.
	FRA // FRA is the ISO 639-3 code for French.
	GLE // GLE is the ISO 639-3 code for Irish.
	GUJ // GUJ is the ISO 639-3 code for Gujarati.
	HEB // HEB is the ISO 639-3 code for Hebrew.
	HIN // HIN is the ISO 639-3 code for Hindi.
	HRV // HRV is the ISO 639-3 code for Croatian.
	HUN // HUN is the ISO 639-3 code for Hungarian.
	HYE // HYE is the ISO 639-3 code for Armenian.
	IND // IND is the ISO 639-3 code for Indonesian.
	ISL // ISL is the ISO 639-3 code for Icelandic.
	ITA // ITA is the ISO 639-3 code for Italian.
	JPN // JPN is the ISO 639-3 code for Japanese.
	KAT // KAT is the ISO 639-3 code for Georgian.
	KAZ // KAZ is the ISO 639-3 code for Kazakh.
	KOR // KOR is the ISO 639-3 code for Korean.
	LAT // LAT is the ISO 639-3 code for Latin.
	LAV // LAV is the ISO 639-3 code for Latvian.
	LIT // LIT is the ISO 639-3 code for Lithuanian.
	LUG // LUG is the ISO 639-3 code for Ganda.
	MAR // MAR is the ISO 639-3 code for Marathi.
	MKD // MKD is the ISO 639-3 code for Macedonian.
	MON // MON is the ISO 639-3 code for Mongolian.
	MRI // MRI is the ISO 639-3 code for Maori.
	MSA // MSA is the ISO 639-3 code for Malay.
	NLD // NLD is the ISO 639-3 code for Dutch.
	NNO // NNO is the ISO 639-3 code for Nynorsk.
	NOB // NOB is the ISO 639-3 code for Bokmal.
	PAN // PAN is the ISO 639-3 code for Punjabi.
	POL // POL is the ISO 639-3 code for Polish.
	POR // POR is the ISO 639-3 code for Portuguese.
	RON // RON is the ISO 639-3 code for Romanian.
	RUS // RUS is the ISO 639-3 code for Russian.
	SLK // SLK is the ISO 639-3 code for Slovak.
	SLV // SLV is the ISO 639-3 code for Slovene.
	SNA // SNA is the ISO 639-3 code for Shona.
	SOM // SOM is the ISO 639-3 code for Somali.
	SOT // SOT is the ISO 639-3 code for Sotho.
	SPA // SPA is the ISO 639-3 code for Spanish.
	SQI // SQI is the ISO 639-3 code for Albanian.
	SRP // SRP is the ISO 639-3 code for Serbian.
	SWA // SWA is the ISO 639-3 code for Swahili.
	SWE // SWE is the ISO 639-3 code for Swedish.
	TAM // TAM is the ISO 639-3 code for Tamil.
	TEL // TEL is the ISO 639-3 code for Telugu.
	TGL // TGL is the ISO 639-3 code for Tagalog.
	THA // THA is the ISO 639-3 code for Thai.
	TSN // TSN is the ISO 639-3 code for Tswana.
	TSO // TSO is the ISO 639-3 code for Tsonga.
	TUR // TUR is the ISO 639-3 code for Turkish.
	UKR // UKR is the ISO 639-3 code for Ukrainian.
	URD // URD is the ISO 639-3 code for Urdu.
	VIE // VIE is the ISO 639-3 code for Vietnamese.
	XHO // XHO is the ISO 639-3 code for Xhosa.
	YOR // YOR is the ISO 639-3 code for Yoruba.
	ZHO // ZHO is the ISO 639-3 code for Chinese.
	ZUL // ZUL is the ISO 639-3 code for Zulu.
	UnknownIsoCode639_3 // UnknownIsoCode639_3 is the ISO 639-3 code for Unknown.
)

func GetIsoCode639_3FromValue ¶ added in v1.4.0

func GetIsoCode639_3FromValue(name string) IsoCode639_3

GetIsoCode639_3FromValue returns the ISO 639-3 code for the given name.

func (IsoCode639_3) String ¶

func (i IsoCode639_3) String() string

type Language ¶

type Language int

Language is the type used for enumerating the so far 75 languages which can be detected by Lingua.

const ( Afrikaans Language = iota Albanian Arabic Armenian Azerbaijani Basque Belarusian Bengali Bokmal Bosnian Bulgarian Catalan Chinese Croatian Czech Danish Dutch English Esperanto Estonian Finnish French Ganda Georgian German Greek Gujarati Hebrew Hindi Hungarian Icelandic Indonesian Irish Italian Japanese Kazakh Korean Latin Latvian Lithuanian Macedonian Malay Maori Marathi Mongolian Nynorsk Persian Polish Portuguese Punjabi Romanian Russian Serbian Shona Slovak Slovene Somali Sotho Spanish Swahili Swedish Tagalog Tamil Telugu Thai Tsonga Tswana Turkish Ukrainian Urdu Vietnamese Welsh Xhosa Yoruba Zulu Unknown )

func AllLanguages ¶

func AllLanguages() []Language

AllLanguages returns a sorted slice of all currently supported languages.

func AllLanguagesWithArabicScript ¶

func AllLanguagesWithArabicScript() []Language

AllLanguagesWithArabicScript returns a sorted slice of all built-in languages supporting the Arabic script.

func AllLanguagesWithCyrillicScript ¶

func AllLanguagesWithCyrillicScript() []Language

AllLanguagesWithCyrillicScript returns a sorted slice of all built-in languages supporting the Cyrillic script.

func AllLanguagesWithDevanagariScript ¶

func AllLanguagesWithDevanagariScript() []Language

AllLanguagesWithDevanagariScript returns a sorted slice of all built-in languages supporting the Devanagari script.

func AllLanguagesWithLatinScript ¶

func AllLanguagesWithLatinScript() []Language

AllLanguagesWithLatinScript returns a sorted slice of all built-in languages supporting the Latin script.

func AllSpokenLanguages ¶

func AllSpokenLanguages() (languages []Language)

AllSpokenLanguages returns a sorted slice of all supported languages that are not extinct but still spoken.

func GetLanguageFromIsoCode639_1 ¶

func GetLanguageFromIsoCode639_1(isoCode IsoCode639_1) Language

GetLanguageFromIsoCode639_1 returns the language for the given ISO 639-1 code enum value.

func GetLanguageFromIsoCode639_3 ¶

func GetLanguageFromIsoCode639_3(isoCode IsoCode639_3) Language

GetLanguageFromIsoCode639_3 returns the language for the given ISO 639-3 code enum value.

func (Language) IsoCode639_1 ¶

func (language Language) IsoCode639_1() IsoCode639_1

IsoCode639_1 returns a language's ISO 639-1 code.

func (Language) IsoCode639_3 ¶

func (language Language) IsoCode639_3() IsoCode639_3

IsoCode639_3 returns a language's ISO 639-3 code.

type LanguageDetector ¶

type LanguageDetector interface {

// DetectLanguageOf detects the language of the given text.

// The boolean return value indicates whether a language can be reliably

// detected. If this is not possible, (Unknown, false) is returned.

DetectLanguageOf(text string) (Language, bool)

// DetectMultipleLanguagesOf attempts to detect multiple languages in

// mixed-language text. This feature is experimental and under continuous

// development.

//

// A slice of DetectionResult is returned containing an entry for each

// contiguous single-language text section as identified by the library.

// Each entry consists of the identified language, a start index and an

// end index. The indices denote the substring that has been identified

// as a contiguous single-language text section.

DetectMultipleLanguagesOf(text string) []DetectionResult

// ComputeLanguageConfidenceValues computes confidence values for each

// language supported by this detector for the given input text. These

// values denote how likely it is that the given text has been written

// in any of the languages supported by this detector.

//

// A slice of ConfidenceValue is returned containing those languages which

// the calling instance of LanguageDetector has been built from. The entries

// are sorted by their confidence value in descending order. Each value is

// a probability between 0.0 and 1.0. The probabilities of all languages

// will sum to 1.0. If the language is unambiguously identified by the rule

// engine, the value 1.0 will always be returned for this language. The

// other languages will receive a value of 0.0.

ComputeLanguageConfidenceValues(text string) []ConfidenceValue

// ComputeLanguageConfidence computes the confidence value for the given

// language and input text. This value denotes how likely it is that the

// given text has been written in the given language.

//

// The value that this method computes is a number between 0.0 and 1.0.

// If the language is unambiguously identified by the rule engine, the value

// 1.0 will always be returned. If the given language is not supported by

// this detector instance, the value 0.0 will always be returned.

ComputeLanguageConfidence(text string, language Language) float64

}

LanguageDetector is the interface describing the available methods for detecting the language of some textual input.

type LanguageDetectorBuilder ¶

type LanguageDetectorBuilder interface {

// WithMinimumRelativeDistance sets the desired value for the minimum

// relative distance measure.

//

// By default, Lingua returns the most likely language for a given

// input text. However, there are certain words that are spelled the

// same in more than one language. The word `prologue`, for instance,

// is both a valid English and French word. Lingua would output either

// English or French which might be wrong in the given context.

// For cases like that, it is possible to specify a minimum relative

// distance that the logarithmized and summed up probabilities for

// each possible language have to satisfy.

//

// Be aware that the distance between the language probabilities is

// dependent on the length of the input text. The longer the input

// text, the larger the distance between the languages. So if you

// want to classify very short text phrases, do not set the minimum

// relative distance too high. Otherwise, you will get most results

// returned as Unknown which is the return value for cases

// where language detection is not reliably possible.

//

// Panics if distance is smaller than 0.0 or greater than 0.99.

WithMinimumRelativeDistance(distance float64) LanguageDetectorBuilder

// WithPreloadedLanguageModels configures LanguageDetectorBuilder to

// preload all language models when creating the instance of LanguageDetector.

//

// By default, Lingua uses lazy-loading to load only those language

// models on demand which are considered relevant by the rule-based

// filter engine. For web services, for instance, it is rather

// beneficial to preload all language models into memory to avoid

// unexpected latency while waiting for the service response. This

// method allows switching between these two loading modes.

WithPreloadedLanguageModels() LanguageDetectorBuilder

// WithLowAccuracyMode disables the high accuracy mode in order to save

// memory and increase performance.

//

// By default, Lingua's high detection accuracy comes at the cost of

// loading large language models into memory which might not be feasible

// for systems running low on resources.

//

// This method disables the high accuracy mode so that only a small subset

// of language models is loaded into memory. The downside of this approach

// is that detection accuracy for short texts consisting of less than 120

// characters will drop significantly. However, detection accuracy for texts

// which are longer than 120 characters will remain mostly unaffected.

WithLowAccuracyMode() LanguageDetectorBuilder

// Build creates and returns the configured instance of LanguageDetector.

Build() LanguageDetector

// contains filtered or unexported methods

}

LanguageDetectorBuilder is the interface that defines any other settings to use for building an instance of LanguageDetector, except for the languages to use.

type UnconfiguredLanguageDetectorBuilder ¶

type UnconfiguredLanguageDetectorBuilder interface {

// FromAllLanguages configures the LanguageDetectorBuilder

// to use all built-in languages.

FromAllLanguages() LanguageDetectorBuilder

// FromAllSpokenLanguages configures the LanguageDetectorBuilder

// to use all built-in spoken languages.

FromAllSpokenLanguages() LanguageDetectorBuilder

// FromAllLanguagesWithArabicScript configures the LanguageDetectorBuilder

// to use all built-in languages supporting the Arabic script.

FromAllLanguagesWithArabicScript() LanguageDetectorBuilder

// FromAllLanguagesWithCyrillicScript configures the LanguageDetectorBuilder

// to use all built-in languages supporting the Cyrillic script.

FromAllLanguagesWithCyrillicScript() LanguageDetectorBuilder

// FromAllLanguagesWithDevanagariScript configures the LanguageDetectorBuilder

// to use all built-in languages supporting the Devanagari script.

FromAllLanguagesWithDevanagariScript() LanguageDetectorBuilder

// FromAllLanguagesWithLatinScript configures the LanguageDetectorBuilder

// to use all built-in languages supporting the Latin script.

FromAllLanguagesWithLatinScript() LanguageDetectorBuilder

// FromAllLanguagesWithout configures the LanguageDetectorBuilder

// to use all built-in languages except for those specified as arguments

// passed to this method. Panics if less than two languages are used to

// build the LanguageDetector.

FromAllLanguagesWithout(languages ...Language) LanguageDetectorBuilder

// FromLanguages configures the LanguageDetectorBuilder to use the languages

// specified as arguments passed to this method. Panics if less than two

// languages are specified.

FromLanguages(languages ...Language) LanguageDetectorBuilder

// FromIsoCodes639_1 configures the LanguageDetectorBuilder to use those

// languages whose ISO 639-1 codes are specified as arguments passed to

// this method. Panics if fewer than two ISO codes are specified.

FromIsoCodes639_1(isoCodes ...IsoCode639_1) LanguageDetectorBuilder

// FromIsoCodes639_3 configures the LanguageDetectorBuilder to use those

// languages whose ISO 639-3 codes are specified as arguments passed to

// this method. Panics if fewer than two ISO codes are specified.

FromIsoCodes639_3(isoCodes ...IsoCode639_3) LanguageDetectorBuilder

}

UnconfiguredLanguageDetectorBuilder is the interface describing the methods for specifying which languages will be used to build an instance of LanguageDetector. All methods return an implementation of the interface LanguageDetectorBuilder.

func NewLanguageDetectorBuilder ¶

func NewLanguageDetectorBuilder() UnconfiguredLanguageDetectorBuilder

NewLanguageDetectorBuilder returns a new instance that implements the interface UnconfiguredLanguageDetectorBuilder.
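The two-stage design, in which languages must be chosen before any other setting becomes available, can be sketched with two small types. All names below are hypothetical and strings stand in for languages; the sketch only mirrors the documented rules that fewer than two languages and a distance outside 0.0 to 0.99 cause a panic.

```go
package main

import "fmt"

// unconfiguredBuilder only offers language selection, so a detector cannot
// be built before the languages are known.
type unconfiguredBuilder struct{}

// configuredBuilder offers the remaining settings and the build step.
type configuredBuilder struct {
	languages   []string
	minDistance float64
	preload     bool
}

// detector is a hypothetical result type holding the chosen configuration.
type detector struct {
	languages   []string
	minDistance float64
	preload     bool
}

func newBuilder() unconfiguredBuilder { return unconfiguredBuilder{} }

func (unconfiguredBuilder) fromLanguages(languages ...string) *configuredBuilder {
	if len(languages) < 2 {
		panic("at least two languages must be specified")
	}
	return &configuredBuilder{languages: languages}
}

func (b *configuredBuilder) withMinimumRelativeDistance(d float64) *configuredBuilder {
	if d < 0.0 || d > 0.99 {
		panic("minimum relative distance must lie between 0.0 and 0.99")
	}
	b.minDistance = d
	return b
}

func (b *configuredBuilder) withPreloadedModels() *configuredBuilder {
	b.preload = true
	return b
}

func (b *configuredBuilder) build() detector {
	return detector{b.languages, b.minDistance, b.preload}
}

// panics reports whether calling f results in a panic.
func panics(f func()) (didPanic bool) {
	defer func() {
		if recover() != nil {
			didPanic = true
		}
	}()
	f()
	return false
}

func main() {
	d := newBuilder().
		fromLanguages("English", "German").
		withMinimumRelativeDistance(0.25).
		withPreloadedModels().
		build()
	fmt.Println(len(d.languages), d.minDistance, d.preload) // 2 0.25 true

	// A single language leaves nothing to decide between.
	fmt.Println(panics(func() { newBuilder().fromLanguages("English") })) // true
}
```

Splitting the builder into two types moves one class of mistakes to compile time: code that tries to call the build step without selecting languages first does not compile, because the first type has no such method.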
